The "Chatbox" is Just the Interface: Meet the AI Harness

100% user image (cleaned up by AI). 100% human vision. 50% AI written. Nerd level: 4.5/10

If you’ve used ChatGPT, Perplexity, Claude, or Gemini, you’ve interacted with a Large Language Model (LLM). But for most people, the experience begins and ends with that little text box at the bottom of the screen.

To move from “playing with AI” to “building with AI,” you need to understand that the LLM is just the engine. To make it actually useful for real-world work, we wrap it in what I call an AI Harness.

The Anatomy of the Harness

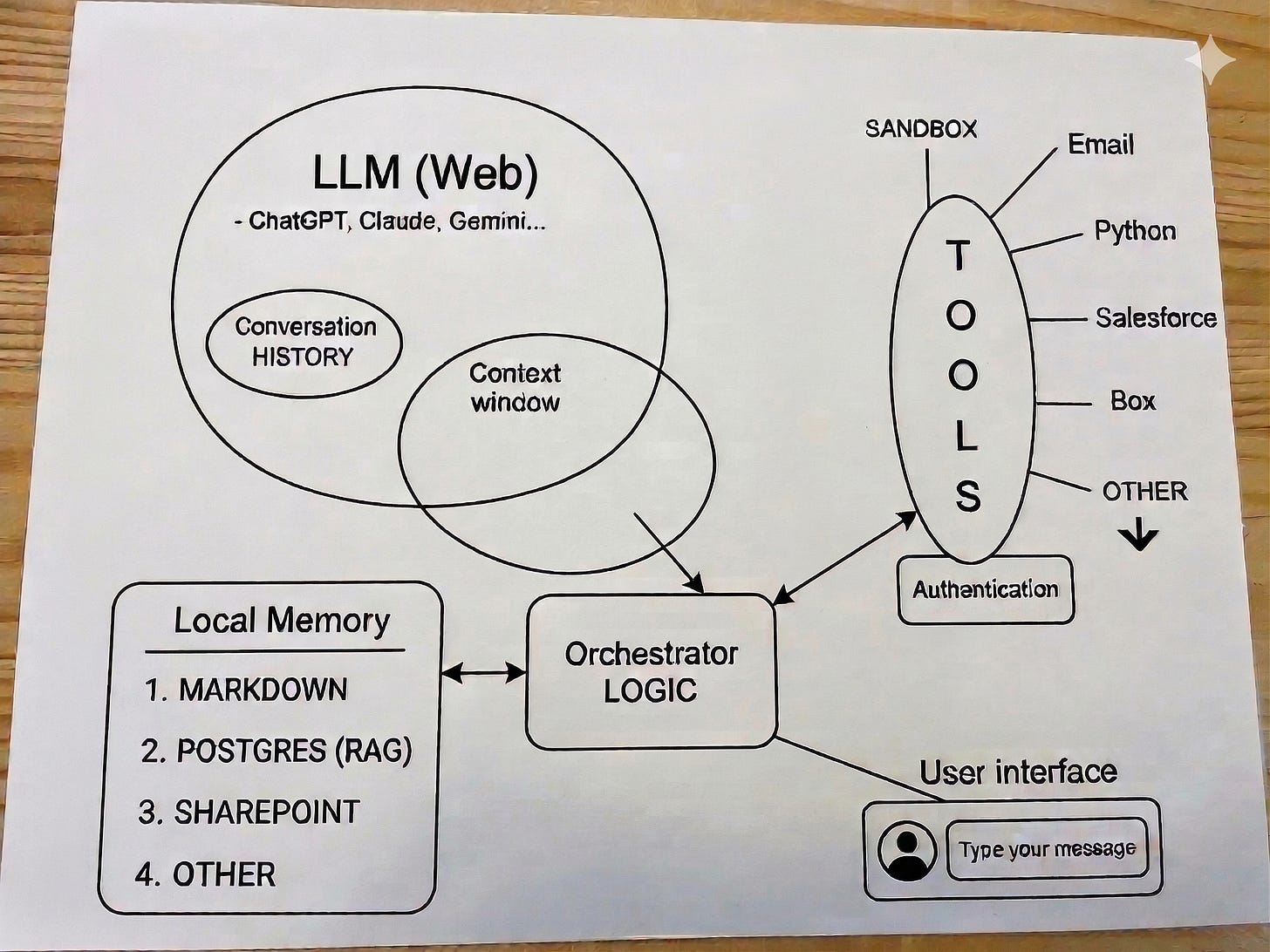

Take a look at the diagram above. I’ll be the first to admit: this isn’t a perfect technical schematic. In the real world, the “plumbing” is a lot messier, and lines overlap in ways that would make a systems architect sweat. But for our purposes, it’s exactly the right level of “good enough” to show how the pieces fit together.

Notice how the LLM (the big circle) is actually isolated? On its own, it only knows what it was trained on months or years ago. The Harness is the infrastructure we build around it to give it “hands” and a “memory.”

The Orchestrator Logic: This is the control logic of the operation. It sits between you and the LLM and decides how to handle each request: what context to fetch, which tools to offer, and when to call the model. It’s the traffic controller.

Local Memory: This is your proprietary data: Markdown files, PDFs in SharePoint, or rows in a Postgres database. The Harness retrieves the relevant pieces at request time, a pattern usually called Retrieval-Augmented Generation (RAG), so the LLM can talk about your specific business, not just general internet knowledge.

The Context Window: Think of this as the LLM’s short-term “working memory.” It has a fixed size limit, so the Harness feeds in only the bits of your Local Memory that matter for the task at hand.

Tools: This is where it gets powerful. Through the Harness, the AI can actually do things: check your email, run a Python script, or update a record in Salesforce.
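If you like seeing things in code, here is a toy sketch of how those four pieces could fit together. Everything in it is made up for illustration: `LOCAL_MEMORY`, `retrieve`, `build_context`, `fake_llm`, `send_email`, and `handle` are hypothetical names, the "LLM" is a stub instead of a real model call, and the retrieval is plain keyword matching, not a real RAG pipeline. Treat it as a sketch of the idea, not how any particular product works.

```python
import re

# Local Memory: hypothetical stand-in for your proprietary documents.
LOCAL_MEMORY = {
    "refund-policy.md": "Refunds are issued within 14 days of purchase.",
    "onboarding.md": "New hires receive laptops on their first day.",
}


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, limit: int = 1) -> list[str]:
    """Naive keyword retrieval: rank documents by words shared with the query."""
    query_words = tokenize(query)
    scored = []
    for name, text in LOCAL_MEMORY.items():
        overlap = len(query_words & tokenize(text))
        if overlap:
            scored.append((overlap, name, text))
    scored.sort(reverse=True)
    return [text for _, _, text in scored[:limit]]


def build_context(user_message: str, max_chars: int = 500) -> str:
    """Context Window: pack only the relevant snippets, capped at a size limit."""
    context = "\n".join(retrieve(user_message))[:max_chars]
    return f"Context:\n{context}\n\nUser: {user_message}"


def send_email(to: str) -> str:
    """Tool: pretends to send an email (assumption: no real mail is sent)."""
    return f"(pretend) email sent to {to}"


TOOLS = {"send_email": send_email}


def fake_llm(prompt: str) -> str:
    """Stub for a real model call: returns a tool request or a plain answer."""
    user_line = prompt.rsplit("User: ", 1)[-1]
    if "email" in user_line.lower():
        return "TOOL send_email billing@example.com"
    context = prompt.split("Context:\n", 1)[1].split("\n\nUser:", 1)[0]
    return "ANSWER " + (context or "I do not know.")


def handle(user_message: str) -> str:
    """Orchestrator: build the context, call the model, run a tool if asked."""
    reply = fake_llm(build_context(user_message))
    if reply.startswith("TOOL "):
        _, tool_name, argument = reply.split(" ", 2)
        return TOOLS[tool_name](argument)
    return reply[len("ANSWER "):]


if __name__ == "__main__":
    print(handle("How many days until a refund is issued?"))
    print(handle("Please email billing about my invoice"))
```

The thing to notice is that `handle` never lets the model touch your files or your inbox directly. The Harness decides what goes into the prompt and whether a requested tool actually gets run.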

The Takeaway: When you type a message, you aren’t just talking to a model; you are triggering an orchestrated flow of data and tools. We aren’t just “chatting” anymore. We’re directing a system that retrieves context, calls the model, and runs tools on our behalf.